In a previous entry, How many watts do you need?, I discussed how transmitter power affects the received signal, and touched on the concept of the SNR, Signal to Noise Ratio. Seeing numbers expressed in dB is one thing, but actually hearing the difference between a station with an SNR of 10 dB and one of 20 dB is far more enlightening.

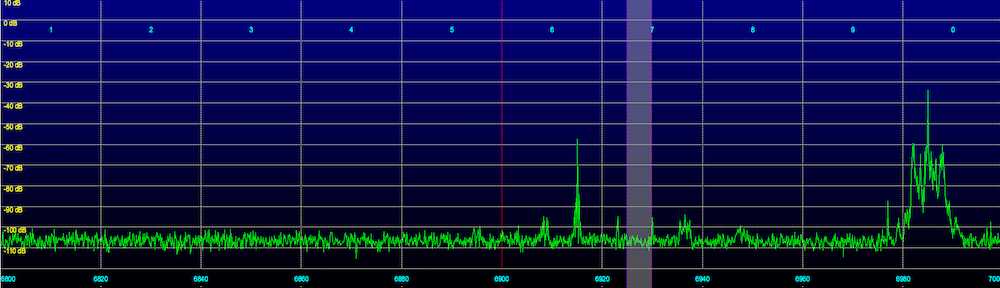

I created some simulated Signal to Noise Ratio recordings. They were produced by mixing a relatively constant noise signal (actual RF static from a Software Defined Radio connected to an antenna) with a software generated AM signal. One difference between these recordings and an actual station is that there is no fading, so real world conditions are likely to be somewhat worse, depending on the amount of fading the station is experiencing.
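For anyone who wants to try something similar, here is a minimal sketch of the mixing step. It is not the tool used for the recordings here: it assumes the SNR is defined as the ratio of RMS signal level to RMS noise level, and the function names (`rms`, `am_signal`, `scale_to_snr`, `mix`) and the tone and carrier frequencies are made up for illustration. Any list of noise samples will do for the noise input, for example Gaussian noise as a stand-in for recorded static.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def am_signal(n, sample_rate=8000, carrier_hz=1000.0, tone_hz=100.0, depth=0.8):
    """Hypothetical test signal: a carrier amplitude modulated by a single tone."""
    out = []
    for i in range(n):
        t = i / sample_rate
        envelope = 1.0 + depth * math.sin(2 * math.pi * tone_hz * t)
        out.append(envelope * math.sin(2 * math.pi * carrier_hz * t))
    return out

def scale_to_snr(signal, noise, snr_db):
    """Scale the signal so its RMS level sits snr_db above the noise RMS level."""
    gain = (rms(noise) / rms(signal)) * 10 ** (snr_db / 20)
    return [gain * s for s in signal]

def mix(signal, noise, snr_db):
    """Add the scaled signal to the (unchanged) noise, sample by sample."""
    scaled = scale_to_snr(signal, noise, snr_db)
    return [s + n for s, n in zip(scaled, noise)]
```

Calling `mix(am_signal(8000), noise, 10)` with one second of noise samples would give a one second clip at roughly 10 dB SNR under this definition. The noise is left untouched and only the signal is scaled, which matches the idea of holding the noise level constant.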

I’ve produced five recordings, with SNRs of 0, 6, 10, 20 and 40 dB. An SNR of 0 dB means that the signal and noise levels are exactly the same. This is essentially the weakest signal that you could possibly receive. On the other hand, an SNR of 40 dB represents excellent reception conditions, say that of a local high powered MW station. The others fall in between.

Remember that every 6 dB of SNR is equivalent to 6 dB more signal (with the noise level held constant), in other words, doubling the signal voltage, which means quadrupling the transmitter power. Conversely, a drop of 6 dB is the same as cutting the transmitter power to one quarter. Doubling or halving the power is only a 3 dB change.
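The decibel arithmetic is easy to check with a few lines of code. This is only a sketch using the standard definitions (10 times the log of a power ratio, 20 times the log of a voltage ratio). The function names are made up for illustration.

```python
import math

def db_to_power_ratio(db):
    """Power ratio corresponding to a change of db decibels."""
    return 10 ** (db / 10)

def db_to_voltage_ratio(db):
    """Voltage (amplitude) ratio corresponding to a change of db decibels."""
    return 10 ** (db / 20)

def power_ratio_to_db(ratio):
    """Decibel change corresponding to a given power ratio."""
    return 10 * math.log10(ratio)
```

With these, `db_to_voltage_ratio(6)` comes out very close to 2 and `db_to_power_ratio(6)` very close to 4, while `power_ratio_to_db(2)` is about 3 dB.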

Let’s make up a crude example. A very strong pirate signal may have an SNR of 30 dB, somewhat weaker than a local station. Going from 30 dB to 10 dB, a drop of 20 dB, is a change in transmitter power of a factor of 100. That is like going from a 200 watt transmitter to a 2 watt transmitter. A 1 watt transmitter, half the power again, would be another 3 dB lower, or around 7 dB. It would be slightly stronger than the 6 dB simulated recording below.
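To play with other power levels, the same kind of estimate can be sketched in code. It assumes the noise level and propagation stay exactly the same, so the SNR changes only with transmitter power, which is a big simplification. The function name `snr_at_power` and the 30 dB at 200 watts reference point are just the made-up example figures.

```python
import math

def snr_at_power(ref_snr_db, ref_watts, watts):
    """Estimated SNR at a new transmitter power, given a reference SNR and power.

    Assumes noise level and propagation are unchanged (hypothetical ideal case).
    """
    return ref_snr_db + 10 * math.log10(watts / ref_watts)

# Example reference: 30 dB SNR from a 200 watt transmitter
for watts in (200, 50, 20, 2, 1):
    print(f"{watts:>4} W -> {snr_at_power(30, 200, watts):.1f} dB")
```

Under these assumptions a 2 watt transmitter lands at 10 dB and a 1 watt transmitter at about 7 dB, while a 20 watt transmitter would still be at 20 dB.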

Listen to the simulated recordings below to see the effects of various Signal to Noise Ratios:

0 dB Signal to Noise Ratio (SNR)

6 dB Signal to Noise Ratio (SNR)

10 dB Signal to Noise Ratio (SNR)

20 dB Signal to Noise Ratio (SNR)

40 dB Signal to Noise Ratio (SNR)

Interesting comparisons. Most of my reception of pirate radio sounds like the 0 dB SNR example. And most of my IDs of those stations are based on comparisons with familiar material I’ve heard before, or comparing notes with other DXers. Eking out an ID without any help from outside references would depend heavily on luck and using noise/hiss reduction and EQ tweaking on the recordings.

To get an independent station ID, with no help/cheating, I’d need reception comparable to at least the 6 dB sample, without fade or QRN during the ID.

Some SSB stations make it above the 20 dB range (especially WTCR, Wolverine, and, more recently, some XFM shows), but I’ve never heard an AM pirate station on my own receiver/antenna that sounded better than approx. 6-10 dB, with lots of fading. I have, however, heard a few sounding more like the 20 dB sample via remote tuners closer to the East coast with good outdoor antennas.

Your power calculations are off.

6 dB more signal is equivalent to quadrupling the power. A drop of 6 dB is the same as cutting the transmitter power in half twice, to one-quarter.

20 dB is a change in power by a factor of 100; say from 200 watts to 2 watts. And a 1 watt transmitter, half the power, is only a 3 dB change.

Hearing them for real is very enlightening!

Great article. And posting those audio examples of the SNR signals really helped me make sense of those readouts on my new shortwave radio.

Thanks!